In the past year, we have done a lot of work on performance, and we already had the opportunity to describe some of that work in a previous blog entry. We now feel it is time to provide some numbers, but numbers in themselves are meaningless, unless we provide some context.

Expectations and target goal:

A good place to start is to look at our software stack to get a sense of any limitation: We are relying on a standard set of battle-tested open-source components (Linux, MySQL, Tomcat, Java, Ruby, and all kinds of software libraries…). The best performance numbers we could expect would be with a Tomcat handler that simply returns 200 OK. Such a deployment could achieve 1,000 or even 10,000 requests per second. Now, if the handler needs to keep some persistent state, and each request requires fetching state from a database and writing new state, the numbers could easily be divided by 10, and we would expect results in the 100 to 1,000 requests per second.

Another angle is to look at existing data from the industry:

- Amazon, the largest US eCommerce site, processed about 400 payments/second during black Friday.

- Also, as Pierre mentioned in his previous blog post, typical eCommerce sites usually process a few payments per second at most; a brief calculation shows that a system processing on average 10 payments per second of $10 would already result in $8M per day or over $3B per year.

Based on those numbers (what we expect to achieve and what the industry needs), we understand our target goal is somewhere in the 100 to 1,000 request/sec.

Test scenario:

We decided to test the worst case scenario of a new user making a payment through Kill Bill. We chose to test the raw Kill Bill payment apis (instead of the subscription/invoice apis for instance) because they are easier to test and yet they exercise the whole stack. We configured the system with the Ruby Stripe payment plugin to exercise our JRuby stack, and the analytics plugin which generates additional load on the system by recomputing state (so that data becomes available through KAUI analytics dashboards).

The test creates a new user for each request, which is the worst case scenario where each request translates into creating a new Kill Bill account, creating a new payment method, and then making the payment request. All those operations happen in the context of the Kill Bill stack where the system also generates historical data, audit trails, performs authentication and authorization on each request, allows plugins to keep their own state and of course generates Kill Bill bus events (which for instance are used by the analytics plugin to recompute its state).

Finally, we faked the Stripe request and replaced it with a sleep of 1 second. The goal was to add a long latency to simulate the worst case scenario and avoid overloading the Stripe sandbox and getting banned, which already happened in the past 😉

Hardware:

We decided to run our experiment on AWS. The choice of the hardware was somewhat arbitrary and we decided to go for only one Kill Bill node and a database to reduce the cost and get a sense of what a minimal stack would result in. We chose some decent VMs (16 vCPUs), which definitely provided some power while remaining affordable:

- 1 EC2 instance for Kill Bill (c4.4xlarge, that is 16 vCPUs)

- 1 RDS instance (db.m4.4xlarge that is also 16 vCPU and SSD)

Testbed:

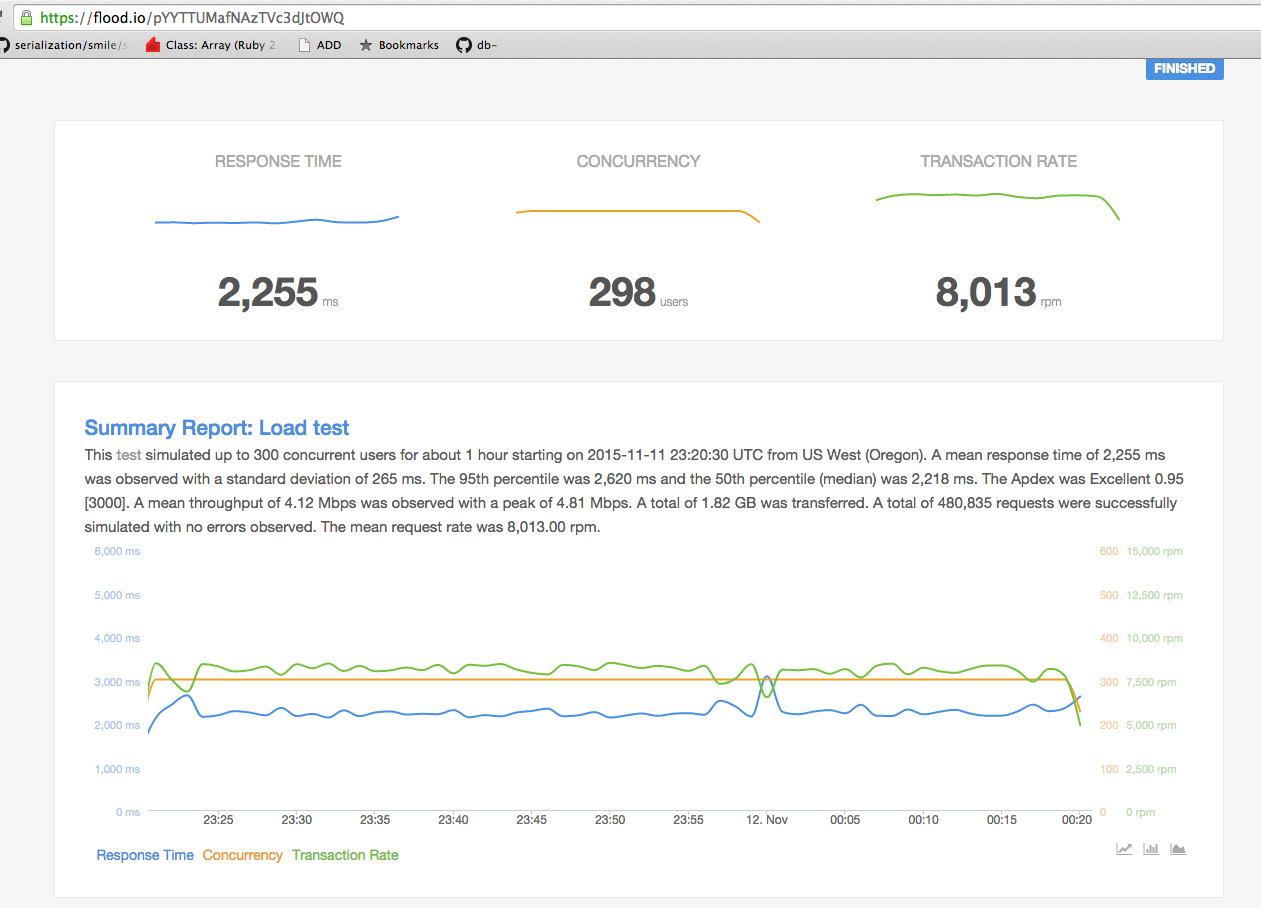

We are relying on flood.io for our testing because it is easy to configure client machines and extract numbers. We used 5 client nodes and configured each of them with a concurrency level of 60 threads; we probably over-provisioned the clients but we wanted to make sure the bottleneck would not be our testbed.

Results:

Now that we are done with context, let’s talk a bit about the test and the results. We let the test run for a full hour. 100% of the requests were successful, and the system was following (i.e. bus events were all delivered on time). The numbers we got were:

- A sustained rate of 133 req/sec

- A tp95 latency of 2.2 sec (with one second in the Stripe sleep call), so tp95 latency of 1.2 sec under load (i.e. 1.2 sec to create an account, add a payment method and trigger a payment). For reference a normal latency when the system is not under high load is about 200 mSec.

The CPU usage of the Kill Bill instance was about 70% (a good chunk was due to the JRuby stack), and the database was only used at 30%. That, in itself is excellent news because while we can easily scale the Kill Bill servers horizontally, it is harder to do so with the database, and so it means we could potentially increase our numbers that way (assuming such a need even matters).

Kill Bill is very modular and extensible (Kill Bill modules, plugins), and it has been designed with a rich set of features (audits, historical data, authentication, authorization, bus events) to fit the need of financial applications. All those come at a cost, and while performance of a billing and payment system is not necessarily the main concern, it is also important to make sure the system will be able to handle load reliably and provide good enough performance metrics to be used by most organizations (e.g: eCommerce).

We have spent a lot of time profiling our system and this resulted in many iterations of improvements all over the stack. To name just a few: caching layers, UUID generation, Kill Bill bus rewrite, Shiro wiring, optimization of database calls, connection pooling, Ruby plugin code, … In addition to these code changes, we also tuned our system carefully (Tomcat configuration, logging configuration, Kill Bill specific configuration,…). While performance and optimization is a constant work, we are quite pleased to offer those numbers on such a feature rich stack but this is by no means the end of our performance journey… Stay tuned!